Image source: Getty Images

Technical SEO requires both marketing and development skills, but you can accomplish a lot without being a programmer. Let's go through the steps.

When you buy a new car, you only need to press a button to turn it on. If it breaks, you take it to a garage, and they fix it.

With website repair, it's not quite so simple, because you can solve a problem in many ways. And a website can work perfectly fine for users and still be bad for SEO. That's why we need to go under the hood of your website and look at some of its technical aspects.

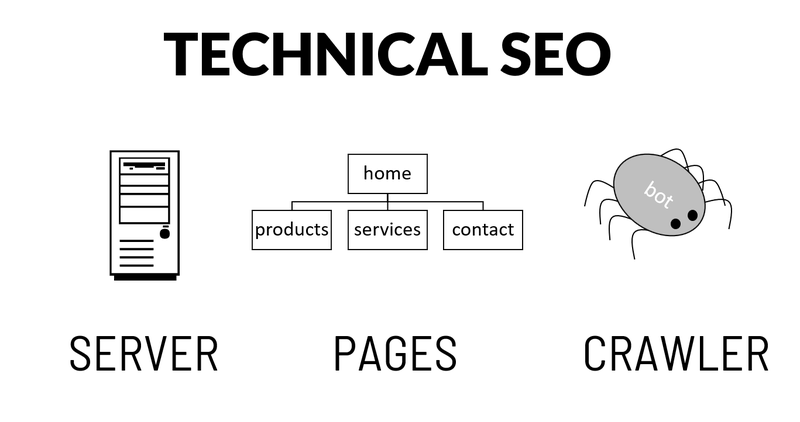

Technical SEO addresses server-related, page-related, and crawler issues. Image source: Author

Overview: What is technical SEO?

Technical SEO refers to optimizing your site architecture for search engines, so they can optimally index and rank your content. The architecture pillar is the foundation of your SEO and often the first step in any SEO project.

It sounds geeky and complicated, but it isn't rocket science. Still, to meet all the SEO requirements, you may have to get your hands dirty or call in your webmaster along the way.

What comprises technical SEO?

Technical SEO comprises three areas: server-related optimizations, page-related optimizations, and crawl-related issues. Below, you will find a technical SEO checklist covering the essential items to optimize for a small site.

1. Server-related optimizations

A search engine's main goal is to deliver a great user experience. Search engines tune their algorithms to send users to great sites that answer their questions. You want the same for your users, so you share the same goal. We'll look at the following server-related settings:

- Domain name and server settings

- Secure server settings

- Primary domain name configuration

2. Page-related optimizations

Your site is hosted on the server and is made up of pages. The second area we'll look at for technical SEO consists of issues related to the pages on your site:

- URL optimizations

- Language settings

- Canonical settings

- Navigation menus

- Structured data

3. Crawl-related issues

Once server and page issues are corrected, search engine crawlers may still run into obstacles. The third area we look at is related to crawling the site:

- Robots.txt instructions

- Redirections

- Broken links

- Page load speed

How to improve your website's technical SEO

The best way to improve SEO site architecture is to use an SEO tool called a site crawler, or a "spider," which moves through the web from link to link. An SEO spider can simulate the way search engines crawl. But first, we have some preparation work to handle.

1. Fix domain name and server settings

Before we can crawl, we need to define and delimit the scope by adjusting server settings. If your site is on a subdomain of a provider (for example, WordPress, Wix, or Shopify) and you don't have your own domain name, this is the time to get one.

Also, if you haven't configured a secure server certificate, known as an SSL (secure sockets layer) certificate, do that too. Search engines care about the safety and trustworthiness of your site. They favor secure sites. Both domain names and SSL certificates are low cost and high value for your SEO.

You need to decide which version of your domain name you want to use. Many people use www in front of their domain name, which simply stands for "world wide web." Or you can choose to use the shorter version without the www, simply domain.com.

Redirect requests from the version you are not using to the one you are, which we call the primary domain. This way, you avoid duplicate content and send consistent signals to both users and search engine crawlers.

The same goes for the secure version of your site. Make sure you are redirecting the http version to the https version of your primary domain name.
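To make the redirect rules concrete, here is a minimal Python sketch of the mapping a server would apply: every www/non-www and http/https variant collapses onto one primary https URL. The host name `www.yourdomain.com` and the function names are placeholders invented for illustration, and the sketch ignores ports. The actual redirect is configured on your server or by your hosting provider, not in a script like this.

```python
from urllib.parse import urlsplit, urlunsplit

# Hypothetical primary domain: replace with the version you chose.
PRIMARY_HOST = "www.yourdomain.com"


def primary_url(url: str) -> str:
    """Return the https URL on the primary host that `url` should resolve to."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    bare = PRIMARY_HOST[4:] if PRIMARY_HOST.startswith("www.") else PRIMARY_HOST
    # Only rewrite hosts that are a www/non-www variant of the primary domain.
    if host in (bare, "www." + bare):
        host = PRIMARY_HOST
    path = parts.path or "/"
    return urlunsplit(("https", host, path, parts.query, parts.fragment))


def needs_redirect(url: str) -> bool:
    """True when `url` is not already the primary https version."""
    return primary_url(url) != url
```

A quick way to use it is to run `needs_redirect` over the four variants of your home page (http and https, with and without www) and confirm that only one of them returns `False`.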

2. Check robots.txt settings

Make this small check to verify your domain is crawlable. Type this URL into your browser:

yourdomain.com/robots.txt

If the file you see uses the word disallow, then you're probably blocking crawler access to parts of your site, or maybe all of it. Check with the site developer to understand why they are doing this.

The robots.txt file is a commonly accepted protocol for regulating crawler access to websites. It's a text file placed at the root of your domain, and it instructs crawlers on what they are allowed to access.

Robots.txt is mainly used to prevent access to certain pages or entire web servers, but by default, everything on your site is accessible to anyone who knows the URL.

The file provides mainly two types of information: user agent information and allow or disallow statements. A user agent is the name of the crawler, such as Googlebot, Bingbot, Baiduspider, or Yandex Bot, but most often you will simply see a star symbol, *, which means that the directive applies to all crawlers.

You can disallow spider access to sensitive or duplicate information on your site. The disallow directive is also widely used when a site is under development. Sometimes developers forget to remove it when the site goes live. Make sure you only disallow pages or directories of your site that really shouldn't be indexed by search engines.
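If you would rather test the rules than read them by eye, Python's standard library ships a robots.txt parser. The sketch below checks whether a given URL is crawlable under a set of rules. The `ROBOTS_TXT` content and the URLs are made-up examples, not taken from any real site; paste in the contents of your own file to test it.

```python
from urllib.robotparser import RobotFileParser

# Example rules (an assumption for illustration): block only the admin area.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

A file containing `Disallow: /` under `User-agent: *` makes this function return `False` for every URL on the site, which is the leftover-from-development case worth catching.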

3. Check for duplicates and useless pages

Now let's do a sanity check and a cleanup of your site's indexation. Go to Google and type the following command: site:yourdomain.com. Google will show you all the pages that have been crawled and indexed from your site.

If your site doesn't have many pages, scroll through the list and note the URLs that are inconsistent. Look for the following:

- Mentions from Google saying that certain pages are similar and therefore not shown in the results

- Pages that shouldn't show because they bring no value to users: admin pages, pagination

- Several pages with essentially the same title and content

If you aren't sure whether a page is useful or not, check your analytics software under landing pages to see if the pages receive any visitors at all. If a page doesn't look right and generates no traffic, it may be best to remove it and let other pages surface instead.

If you have many instances of the above, or the results embarrass you, remove them. In many cases, search engines are good judges of what should rank and what shouldn't, so don't spend too much time on this.

Use the following methods to remove pages from the index but keep them on the site.

The canonical tag

For duplicates and near-duplicates, use the canonical tag on the duplicate pages to indicate they are essentially the same, and that the other page should be indexed.

Insert this line in the <head> section of the page, with the href pointing to the page you want indexed (the URL here is a placeholder):

<link rel="canonical" href="https://yourdomain.com/preferred-page/" />

If you are using a CMS, canonical tags can often be coded into the site and generated automatically.

The noindex tag

For pages that are not duplicates but shouldn't appear in the index, use the noindex tag to remove them. It's a meta tag that goes into the <head> section of the page:

<meta name="robots" content="noindex" />
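To confirm that a page actually carries the tags you expect, you can parse its HTML. The following is a minimal sketch using only Python's standard library. The class and function names are invented for this example, and it assumes you already have the page's HTML as a string (for instance, copied from "view source").

```python
from html.parser import HTMLParser


class IndexingTagParser(HTMLParser):
    """Collect the canonical URL and the noindex directive from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical = None
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attributes = {name: (value or "") for name, value in attrs}
        # <link rel="canonical" href="..."> names the page that should be indexed.
        if tag == "link" and attributes.get("rel", "").lower() == "canonical":
            self.canonical = attributes.get("href")
        # <meta name="robots" content="noindex, ..."> asks crawlers not to index.
        if tag == "meta" and attributes.get("name", "").lower() == "robots":
            content = attributes.get("content", "").lower()
            directives = [item.strip() for item in content.split(",")]
            self.noindex = self.noindex or "noindex" in directives


def indexing_tags(html: str):
    """Return (canonical_url_or_None, has_noindex) for an HTML document."""
    parser = IndexingTagParser()
    parser.feed(html)
    return parser.canonical, parser.noindex
```

Running `indexing_tags` on a duplicate page should return the URL of the page you want indexed. On a page you want hidden, the second value should be `True`.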

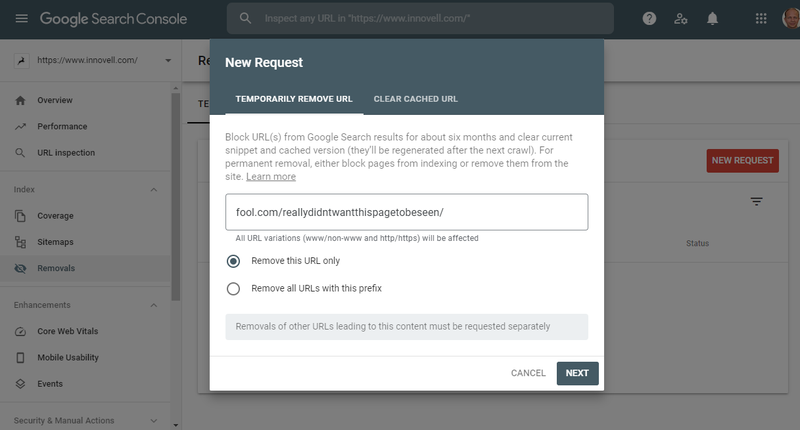

The URL removal tool

Canonical tags and noindex tags are respected by all search engines. Each search engine also offers various options in its webmaster tools. In Google Search Console, it is possible to remove pages from the index. Removal is temporary while the other methods are taking effect.

You can quickly remove URLs from Google's index with the removal tool, but this method is temporary. Image source: Author

The robots.txt file

Be careful using the disallow command in the robots.txt file. It only tells the crawler it cannot visit the page; it does not remove the page from the index. Use it once you have cleared all incriminating URLs from the index.

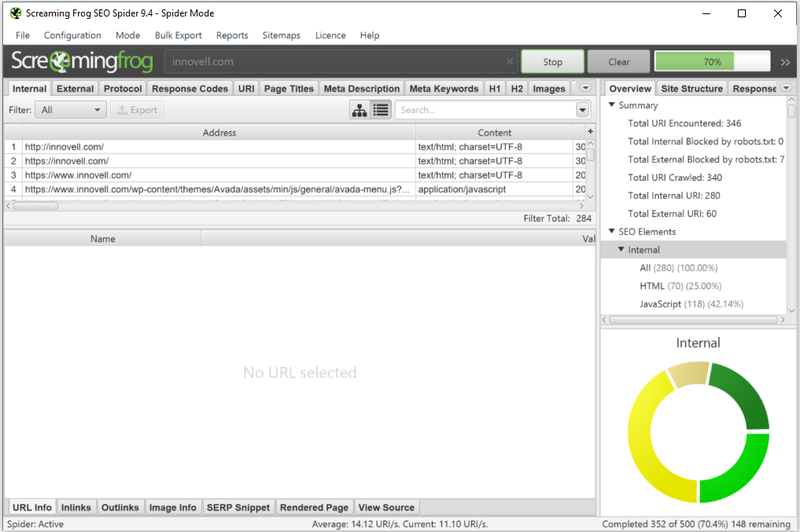

4. Crawl your site like a search engine

For the remainder of this technical SEO run-through, the most efficient next step is to use an SEO tool to crawl your site via the primary domain you configured. This will also help address page-related issues.

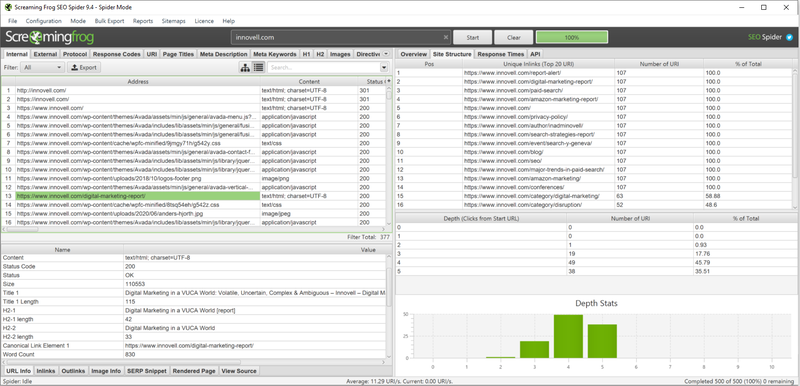

In the following, we use Screaming Frog, site crawl software that is free for up to 500 URLs. Ideally, you will have cleaned your site with URL removals via canonical and noindex tags in step 3 before you crawl your site, but it isn't mandatory.

Screaming Frog is site crawler software, free to use for up to 500 URLs. Image source: Author

5. Verify the scope and depth of crawl

Your first crawl check is to verify that all your pages are being reached. After the crawl, the spider will show how many pages it found and how deep it crawled. Internal linking can help crawlers access all your pages more easily. Make sure you have links pointing to all your pages, and prioritize links to the most important pages, especially from the home page.
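Crawl depth is simply the number of clicks needed to reach a page from the home page. As a rough illustration, the sketch below computes it with a breadth-first search over an internal link map. The `links` dictionary format (page path mapped to the paths it links to) is an assumption made for this example, not the export format of any particular crawler.

```python
from collections import deque


def crawl_depths(links, start="/"):
    """Return {page: clicks from `start`} for every page reachable via links."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links, start="/"):
    """Return known pages that cannot be reached by following links from `start`."""
    reachable = crawl_depths(links, start)
    known = set(links) | {t for targets in links.values() for t in targets}
    return sorted(known - set(reachable))
```

Pages with a high depth, or pages returned by `orphan_pages`, are the ones that need more internal links pointing at them.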

If you find discrepancies between the number of pages crawled and the number of pages on your site, figure out why. Image source: Author

You can compare the number of site pages to the number found in Google to check for consistency. If your entire site is not indexed, you have internal linking or sitemap issues. If too many pages are indexed, you may need to narrow the scope via robots.txt, canonical tags, or noindex tags. All good? Let's move to the next step.
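If you keep both lists of URLs (the ones your crawler found and the ones Google shows), the comparison can be done with two set differences. This is only a sketch: the function name and the plain-list inputs are assumptions, and you would need to collect the indexed URLs yourself.

```python
def compare_coverage(crawled, indexed):
    """Compare crawler-found URLs with indexed URLs and report the differences."""
    crawled, indexed = set(crawled), set(indexed)
    return {
        # Found on the site but missing from the index: check linking or sitemap.
        "not_indexed": sorted(crawled - indexed),
        # In the index but not found by the crawl: candidates for noindex or removal.
        "indexed_but_not_crawled": sorted(indexed - crawled),
    }
```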

6. Correct broken links and redirections

Now that we have defined the scope of the crawl, we can correct errors and imperfections. The first error type is broken links. In principle, you shouldn't have any if your site is managed with a content management system (CMS). Broken links are links pointing to a page that no longer exists. When a user or crawler follows the link, they end up on a "404" page, the HTTP status code for a page that was not found.

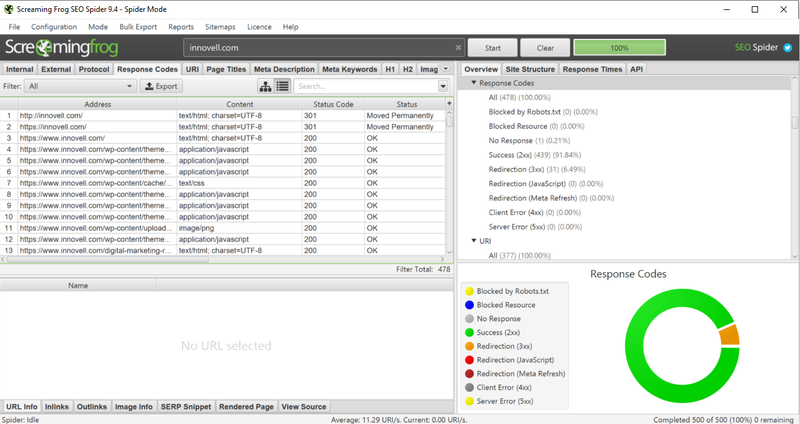

You can see the response codes generated during a site crawl with SEO tools. Image source: Author

We assume you don't have any "500" error codes, critical site errors that need to be fixed by the site developer.

Next, we look at redirections. Redirections slow down users and crawlers. They are a sign of sloppy web development or temporary fixes. It is fine to have a limited number of "301" server codes, meaning "Page permanently moved." Pages with "404" codes are broken links and should be corrected.
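The response codes above translate into a simple triage. Here is a small sketch that sorts crawl results into actions. The labels and the `(url, status)` input format are invented for this example, so adapt them to whatever your crawler exports.

```python
from collections import defaultdict


def triage(status: int) -> str:
    """Map an HTTP status code to a suggested action label."""
    if 200 <= status < 300:
        return "ok"
    if status in (301, 308):
        return "permanent redirect"  # fine in small numbers; link to the final URL
    if status in (302, 303, 307):
        return "temporary redirect"  # check whether it should be permanent
    if status in (404, 410):
        return "broken link"  # fix or remove the link pointing here
    if 500 <= status < 600:
        return "server error"  # escalate to the site developer
    return "review manually"


def group_by_action(crawl_rows):
    """Group (url, status) pairs from a crawl by suggested action."""
    groups = defaultdict(list)
    for url, status in crawl_rows:
        groups[triage(status)].append(url)
    return dict(groups)
```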

7. Create title and meta information

SEO crawlers will identify a number of other issues, but many of them are outside the scope of technical SEO. This is the case for "titles," "meta descriptions," "meta keywords," and "alt" tags. These are all part of your on-page SEO. You can address them later since they require a lot of editorial work related to the content rather than the structure of the site.

For the technical part of the site, identify any automated titles or descriptions that can be generated by your CMS, but note that this requires some programming.

Another issue you may need to address is the site's structured data, the additional information you can insert into pages to pass on to search engines. Again, most CMSes will integrate some structured data, and for a small site, it may not make much of a difference.
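For readers curious what structured data looks like, one common format is JSON-LD using the schema.org vocabulary, embedded in a script tag in the page. The sketch below generates a basic Organization snippet. The function name and all values passed to it are placeholders, and which properties search engines actually use for your site is something to confirm in their documentation.

```python
import json


def organization_jsonld(name: str, url: str, logo_url: str) -> str:
    """Build a JSON-LD script tag describing an organization (schema.org)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo_url,
    }
    return (
        '<script type="application/ld+json">'
        + json.dumps(data, indent=2)
        + "</script>"
    )
```

The returned string is what would be placed in the page's HTML. If your CMS already outputs structured data, there is no need to add it a second time.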

8. Optimize page load speed

The final crawl issue you should look at is page load speed. The spider will identify slow pages, often caused by heavy images or JavaScript. Page speed is something search engines increasingly take into account for ranking purposes because they want end users to have an optimal experience.

Google has developed a tool called PageSpeed Insights, which tests your page speed and makes recommendations to speed it up.
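PageSpeed Insights can also be queried programmatically. The sketch below only builds the request URL and does not send anything. The endpoint and the `url` and `strategy` parameter names reflect our understanding of version 5 of the PageSpeed Insights API, so treat them as assumptions and check Google's current API documentation, including whether an API key is required, before relying on them.

```python
from urllib.parse import urlencode

# Assumed endpoint for version 5 of the PageSpeed Insights API.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def pagespeed_request_url(page_url: str, strategy: str = "mobile") -> str:
    """Build the API request URL for testing `page_url` on mobile or desktop."""
    if strategy not in ("mobile", "desktop"):
        raise ValueError("strategy must be 'mobile' or 'desktop'")
    query = urlencode({"url": page_url, "strategy": strategy})
    return PSI_ENDPOINT + "?" + query
```

For a handful of pages, pasting each URL into the PageSpeed Insights web page is just as effective. A script only pays off when you want to test many pages regularly.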

9. Validate your corrections: Recrawl, resubmit, and check for indexation

Once you have made significant changes based on obstacles or imperfections you encountered in this technical SEO run-through, you should recrawl your site to check that corrections were implemented correctly:

- Redirection of non-www to www

- Redirection of http to https

- Noindex and canonical tags

- Broken links and redirections

- Titles and descriptions

- Response times

If everything looks good, you'll want the site to be indexed by search engines. This will happen automatically if you have a little patience, but if you are in a hurry, you can resubmit important URLs.

Finally, after everything has been crawled and indexed, you should be able to see the updated pages in search engine results.

You can also look at the coverage section of Google Search Console to find details about which pages have been crawled and which were indexed.

3 best practices when improving your technical SEO

Technical SEO can be mysterious, and it requires a lot of patience. Remember, this is the foundation for your SEO performance. Let's look at a few things to keep in mind.

1. Don't focus on quick wins

It's common SEO practice to focus on quick wins: highest impact with lowest effort. This approach may be useful for prioritizing technical SEO tasks for larger sites, but it's not the best way to approach a technical SEO project for a small site. You need to focus on the long term and get your technical foundation right.

2. Invest a little money

To improve your SEO, you may need to invest a little money. Buy a domain name if you don't have one, buy a secure server certificate, use a paid SEO tool, or call in a developer or webmaster. These are probably marginal investments for a business, so don't hesitate.

3. Ask for help when you're blocked

In technical SEO, it's best not to improvise or test and learn. Whenever you are blocked, ask for help. Twitter is full of great and helpful SEO advice, and so are the WebmasterWorld forums. Even Google offers webmaster office hours sessions.

Fix your SEO architecture once and for all

We've covered the essential parts of technical SEO improvements you can carry out on your site. SEO architecture is one of the three pillars of SEO. It's the foundation of your SEO performance, and you can fix it once and for all.

An optimized architecture will benefit all the work you do in the other pillars: all the new content you create and all the backlinks you generate. It can be challenging and take time to get it all right, so get started right away. It's the best thing you can do, and it pays off in the long run.